Multisensory View Management for Augmented Reality

Research project at a glance

Departments and institutes

Type of funding

Period

01.05.2017 to 30.04.2020

Project description

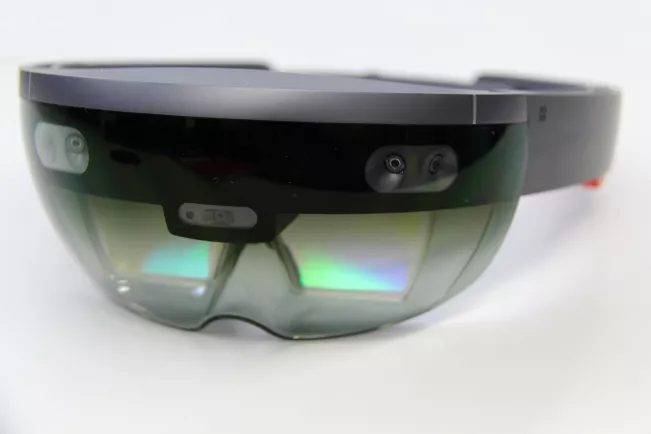

Augmented reality (AR) is a field of research that has seen a steep increase in attention over the last few years. Recently, it has been driven by simple cell phone applications as well as the appearance of low-cost head-worn display devices such as Google Glass, and will likely see further uptake through Microsoft HoloLens.

Nonetheless, the field itself has been growing steadily for well over a decade, driven primarily by research systems, but also by increasing industry interest. The basic premise of AR is the overlay of digital imagery on real-world footage. However, how to present information effectively, in a way that reflects the potential and limitations of the human perceptual system, is still an open issue. To improve the usability and performance of AR systems and applications, perceptual issues must be approached systematically: there is a need to understand the mechanisms behind these problems, to derive requirements, and subsequently to find solutions that mitigate their effects.

To focus our scope, the proposed work will predominantly look into advancing view management techniques, dealing mainly with labels as the primary information visualization technique. Labels are the predominant mode of information communication in most AR applications and are highly intertwined with view management. Labels generally hold text, numerical data or small graphical representations in a flag-like form that points towards the real-world object they refer to. Managing them can be very challenging: for example, it may be necessary to arrange a large number of labels that are likely to cause clutter and occlusion, which makes the provided information difficult to process. Hence, without adequate view management techniques we will not be able to design effective interfaces, especially as complexity rises. This is particularly true when a narrow field-of-view (FOV) head-worn display device is used. These display devices are increasingly popular as high-quality and affordable commercial versions become available, and a higher uptake is expected. Yet adequate view management methods specifically designed for narrow FOV displays are not available.

Aims and Objectives

In the project, we will advance the state of the art in the following areas:

- Create a benchmark system that supports the research effort. It is specifically intended as a platform for performing validations and comparisons between conditions, both within the frame of the research program and by other researchers.

- Create a better understanding of the perceptual and interrelated cognitive issues that arise when using narrow FOV AR displays to explore increasingly complex information, and compare these findings to medium and wide FOV displays.

- Develop innovative view management techniques specifically tuned towards narrow FOV displays, encompassing both (a) improvements to the visual management of information represented through labels and (b) exploring the potential of, and developing, view management methods based on auditory and tactile cues.

- Create guidelines for the design of novel interfaces.

Publications

Articles published by outlets with scientific quality assurance, book publications, and works accepted for publication but not yet published

• Maiero, J., Kruijff, E., Ghinea, G., Hinkenjann, A. PicoZoom: A Context Sensitive Multimodal Zooming Interface. In Proceedings of IEEE International Conference on Multimedia and Expo, 2015.

• Kishishita, N., Kiyokawa, K., Kruijff, E., Orlosky, J., Mashita, T., Takemura, H. Analysing the Effects of a Wide Field of View Augmented Reality Display on Search Performance in Divided Attention Tasks. In Proceedings of the IEEE International Symposium on Mixed and Augmented Reality (ISMAR'14), Munich, Germany, 2014.

• Veas, E., Grasset, R., Kruijff, E., Schmalstieg, D. Extended overview techniques for outdoor augmented reality. IEEE Transactions on Visualization and Computer Graphics, vol. 18, no. 4, p. 565-572, 2012.

• Veas, E., Kruijff, E. Handheld Devices for Mobile Augmented Reality. In Proceedings of the 9th ACM International Conference on Mobile and Ubiquitous Multimedia (MUM2010), Limassol, Cyprus, 2010.

• Kruijff, E., Swan II, E., Feiner, S. Perceptual issues in Augmented Reality Revisited. In Proceedings of the IEEE and ACM International Symposium on Mixed and Augmented Reality (ISMAR'10), Seoul, South Korea, 2010.

• Veas, E., Mulloni, A., Kruijff, E., Regenbrecht, H., Schmalstieg, D. Techniques for View Transition in Multi-Camera Outdoor Environments. In Proceedings of Graphics Interface 2010 (GI2010), Ottawa, Canada, 2010.

• Schall, G., Mendez, E., Kruijff, E., Veas, E., Junghanns, S., Reitinger, B., Schmalstieg, D. Handheld Augmented Reality for Underground Infrastructure Visualization. In Personal and Ubiquitous Computing, Springer, vol. 13, p. 281-291, 2009.

• Veas, E., Kruijff, E. Vesp'R - design and evaluation of a handheld AR device. In Proceedings of the IEEE and ACM International Symposium on Mixed and Augmented Reality (ISMAR'08), p. 43-52, Cambridge, United Kingdom, 2008.

• Kruijff, E., Wesche, G., Riege, K., Goebbels, G., Kunstman, M., Schmalstieg, D. Tactylus, a Pen-Input Device exploring Audiotactile Sensory Binding. In Proceedings of the ACM Symposium on Virtual Reality Software and Technology (VRST'06), p. 316-319, Limassol, Cyprus, 2006.

• Bowman, D., Kruijff, E., LaViola, J., Poupyrev, I. 3D User Interfaces: Theory and Practice. Addison-Wesley, 2005.